Tuesday, August 31, 2010

End of part two

Since all conferences are as much a human adventure as a scientific one, there are a couple of thanks that I would like to address before switching the lights off.

Monday, August 9, 2010

The calm after the storm

Sunday, August 8, 2010

Very Last Summary

- Most Important Result: The Higgs exclusion limits from the Tevatron, of course. Any time now we may get the answer to one of the most important questions in particle physics. Not this time yet, but the thrill is on.

- Most Intriguing Result: the forward-backward asymmetry of top decays at CDF has been updated to $15 \pm 5$ percent and lingers 2 sigma away from the SM prediction of approximately 5 percent.

- Most Relieving Result: the poster from the HARP collaboration saying that the LSND anomaly was due to underestimated contamination of the beam with anti-electron neutrinos. If confirmed, that would solve the 10-year-long puzzle of what went wrong in LSND.

- Best Presentation: Nicolas Sarkozy. Gee, this guy knows how to talk, especially when contrasted with mumbling physicists. What fervor, what facial expressions, what gestures (ok, forget the jokes).

- Best Presentation, seriously: Ben Kilminster, Higgs limits from the Tevatron. Maybe it's because when holding the remote he looks just like Colin Farrell in In Bruges, or maybe because the presentation was clear, concise, and illuminating.

- Worst Presentation: summary of BSM searches. Unfortunately, good experimental talks are rare. The cardinal sins are too much material, overcrowded slides, superficiality, no attempt at explaining the presented results or methodology, and misleading theoretical interpretation.

- Best Animation: the Planck satellite sweeping the sky while uncovering the temperature map. That was just lovely.

- Best Music: given the number of cell phones in the audience, the competition is always fierce in this category. But if what I heard on the first day was really Genesis' Firth of Fifth, that obviously trumps anything.

- Overall Impression: Even though Paris is always worth a mass, the conference was pretty well organized, and I had fun at times, my opinion about the ICHEP series has not changed. Conferences with 1000+ participants are dinosaurs; more a brontosaurus than a T. rex. Parallel sessions contain some interesting material, but the shortness of the talks and the lack of time for discussion preclude any deeper insight. Plenary sessions, on the other hand, are typically hasty and overloaded summaries of what we already heard in the parallels. Alas, one needs an asteroid strike for dinosaurs to be replaced by more flexible mammals... so maybe see you again in 2 years, upside down ;-)

Wednesday, August 4, 2010

My Bet ? A Fourth Generation!

In short my question today is, on which signal or phenomenon should we place our chips if we were to bet that the standard model is finally going to break down ?

I have my own answer. But first, before I give it to you, I feel compelled to be extra careful in a couple of ways.

The first way is dictated by personal reasons: I want to state it here very clearly, because I often get fingered as a rumour-monger or overhyper these days. I do NOT believe that the standard model is breaking down any time soon. I have a feeling that we will have to live with it for a while longer. I do not believe in Supersymmetry at arm's reach or anywhere else, nor in other exotic signals that we might see with present-day machines.

(And, since I am going to talk about something like that in particular below: I do not believe we are going to discover a fourth generation of fermions any time soon; I believe the present 2-sigmaish excesses of CDF and DZERO searches for a new t' quark are not due to a signal. If you really want my opinion... they are due to a coherent underestimation of QCD backgrounds, whose root is the use of the same methodologies by the two experiments!)

The second statement consists in my disclaimer, which I will state today as follows:

"The opinions expressed in this article are those of the author, and they do not reflect in any way those of the institutions to which he is affiliated. These include the CDF and CMS collaborations, as well as the Italian Institute of Nuclear Physics."

The above disclaimer is directed in particular at science reporters and other information recyclers, who should not mistake me for an official source of the experiments in which I work! Of course it is an insufficient shield, but at least nobody can say I have not been clear on the matter.

Okay, now I feel more free to discuss in enthusiastic terms what I think is the single most exciting and promising deviation from standard model predictions that we have in our hands at present: a tentative signal for a fourth-generation quark!

Can Fourth-Generation Quarks Really Exist ?

I have kept my eyes open on searches for a new quark since 2008, when a CDF analysis showed some intriguing high-mass events and a vague deviation of data from backgrounds. (The post linked above is rather well written if you need some introduction to the physics!)

After CDF performed the same analysis with doubled statistics, again finding an excess of high-mass events, I thought things were really interesting and I said so here.

In the meantime, an enlightening paper came out on the Cornell arXiv. Titled "Four Statements About The Fourth Generation", and signed by distinguished theorists, it explained clearly that contrary to what one might think (or read in the Review of Particle Properties, which makes several assumptions in order to state that a fourth generation is excluded by electroweak measurements), a fourth generation of fermions is not ruled out by experimental measurements, and might actually be useful to explain the amount of CP violation we observe in particle decays. I summarized the paper's highlights in another post which I think is worth reading, if you are interested in the topic.

Well, now DZERO has published the results of a quite similar analysis, and it looks like they too see some excess in the same kinematical distributions that CDF used to search for a fourth-generation quark. Again, this effect can be easily understood in terms of background fluctuations or a mismodeling of the high-mass tail of some of the contributing processes. Yet, the coincidence of the two search results warrants some additional thoughts. So let me first of all show what DZERO has just made public.

The DZERO Search For Fourth-Generation Quarks

DZERO has published, in time for ICHEP 2010, a new search for up-type fourth-generation quarks decaying to W bosons and down-type quarks. In a nutshell, the search considers events of the "lepton plus jets" type: the same kind of events on which all the most precise measurements of top quark physics at the Tevatron are based.

In the lepton-plus-jets topology, top quarks are produced in pairs and each decays to a W and a b-quark; then one W yields two hadronic jets, while the other decays to an electron-neutrino or muon-neutrino pair. This results in one neutrino in the final state, which adds some complexity to the reconstruction of the kinematics (the neutrino is undetected, and only its momentum components transverse to the beam direction can be inferred); however, the advantage of having one high-momentum lepton in the event instead of purely hadronic jets is a more than adequate payoff. The events thus must feature a lepton, significant missing energy, and four hadronic jets: backgrounds then are small; the largest is the production of a W boson plus hadronic jets.

When searching for a fourth-generation quark, DZERO does exactly the same thing as in top searches: they assume that the t' quark is produced in pairs, and that it decays 100% of the time into a W boson and a quark (not necessarily a b-quark). The final state is the same as that of top searches, save for the fact that the larger mass of the t' allows a slightly tighter cut on the energy of the leading jet, a device which further reduces backgrounds.

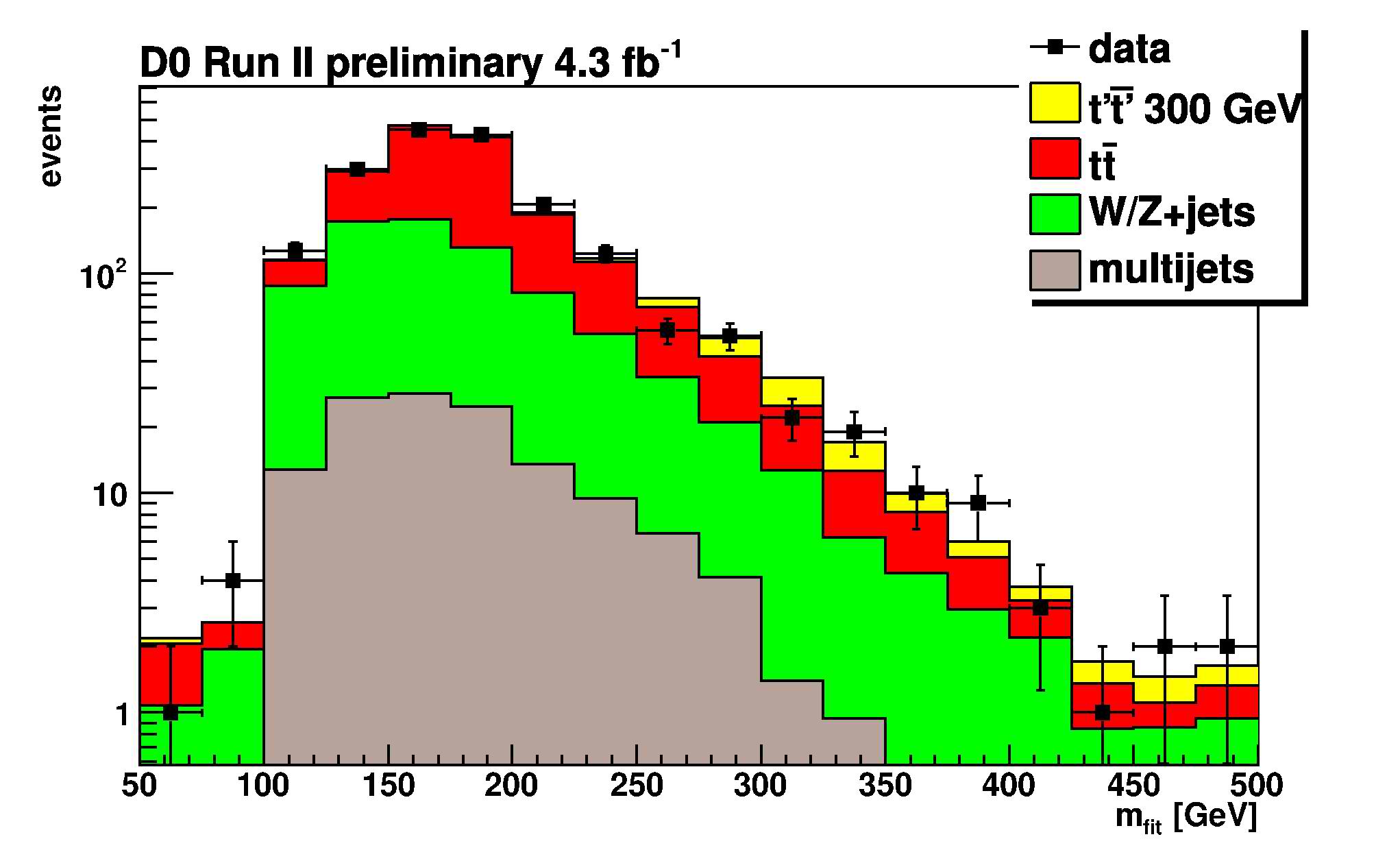

In the end, the data allow the reconstruction of a tentative t' mass, assuming that each event is of the t'-pair-production kind. A kinematic fit searches for the combination of jet assignments to the decay partons which best matches the hypothesized process. One thus obtains a histogram of reconstructed t' mass:

In the figure, you can see with different colours how the predicted numbers of events coming from different processes (top pair production in red, W+jets production in green, and multi-jet production in grey) are distributed in the reconstructed t' mass. The data are shown by black points with error bars, and they match the predicted shape of the backgrounds very well. An example of the contribution that a 300-GeV t' quark would give to the histogram is shown in yellow. Tiny, but not entirely undetectable. Mind you: the vertical axis has a logarithmic scale!

What is maybe not so immediate to discern from the figure is the fact that while backgrounds have a wide distribution in the reconstructed t' mass, the signal of a t' quark, if present, would populate a narrower region: the one around the real mass of the quark. This is entirely the point of having constructed this kinematic variable: discriminating signal from background.

A second discriminating variable is the sum of all transverse energies of the observed final state objects: jets, lepton, and missing energy. This is the so-called "Ht". Ht is large for processes that involve the production of massive states, and so it is a good means to separate t' production from the top and W+jets background. Below you can see how the data compares to backgrounds as a function of Ht; the color coding is the same as above.
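As a minimal sketch of the Ht definition just given, the snippet below adds up the transverse energies of the jets, the lepton, and the missing energy for one event. The function name and the event values are invented for illustration; they are not DZERO code or data.

```python
def transverse_energy_sum(jet_ets, lepton_et, missing_et):
    """Ht: scalar sum of the transverse energies (in GeV) of all final-state objects."""
    return sum(jet_ets) + lepton_et + missing_et


# Invented lepton-plus-jets event: four jets, one lepton, missing energy (all in GeV).
jets = [120.0, 85.0, 60.0, 40.0]
ht = transverse_energy_sum(jets, lepton_et=45.0, missing_et=50.0)
print(f"Ht = {ht} GeV")
```

Events from the decay of heavier states carry more energy in their decay products, so they tend to sit at larger values of this sum.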

DZERO performs a fit in the two-dimensional plane of the t' mass and Ht to extract the possible amount of signal present in the data. This is performed as a function of the unknown value of t' mass: since the distributions of reconstructed mass and Ht of the signal depend on the true t' mass, several fits are performed in series, to extract a limit curve which depends on that parameter; the curve is investigated by points, at 25-GeV intervals in the unknown t' mass.

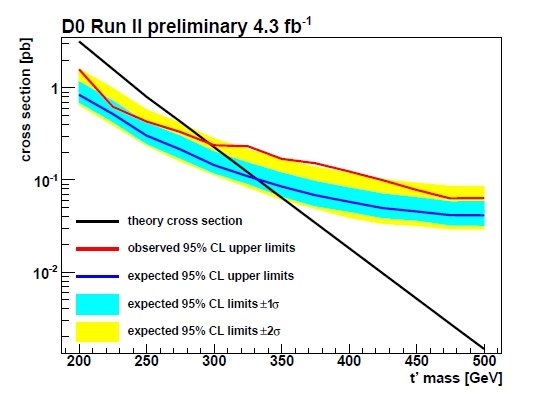

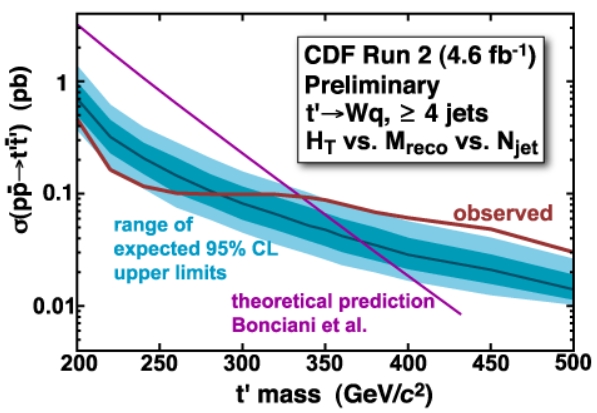

The result of the fits is displayed in the figure below. The t' mass (this time the "true" one, not the reconstructed tentative mass of the kinematic fits) is on the horizontal axis, and on the vertical axis is the production rate of the fourth-generation quark pair. The black line shows the theoretical prediction for the rate, which falls quickly as the t' mass increases: fewer events are expected in the 4.3 inverse femtobarn dataset of analyzed collisions as the t' mass increases, because the higher the mass, the more energy is required to produce the heavy quark.

The theoretical curve of the signal cross section can be compared with the red curve, which shows the upper limit (at 95% confidence level) extracted from the data. The red curve lies below the black one for low masses: a light t' quark (with mass below 296 GeV) is excluded by the data, because it would have been copiously produced in the Tevatron collisions, and would have stuck out in the two tested distributions. For higher mass values, the limit is above the theoretical curve: these mass values are still possible.

Now observe the blue and yellow bands: these describe what rates of the searched quark DZERO expected to limit, as a function of t' mass, given the amount of analyzed data they had and the analysis strategy. The blue band shows 1-sigma variations in the expected limit, and the yellow band shows the range of 2-sigma variations. In practice, the bands pictorially explain what "on average" would result from the search, if no signal were present in the data.

Now, the red curve stays on the edge of the 2-sigma band for masses above 300 GeV. What this means is that DZERO has a slight excess of events which distribute like t' production ones in their data. Not awfully exciting, I'll admit. But now compare the curve to the one found by CDF just a few months ago (the analysis which I have discussed in detail here, as already mentioned):

CDF found a strikingly similar result! True, CDF had more sensitivity, so their limit is slightly better; but the behavior of CDF data and DZERO data is indeed quite similar. A fortuitous coincidence between two 2-sigma results ? That is surely a possibility; another one is that the two experiments, which rely on similar simulation tools, both underestimated the high-energy tail of top or W+jets production.

Yet a third possibility remains on the table: that both CDF and DZERO are seeing the first hint of pair production of a fourth-generation quark. The amount of data of the two experiments would be insufficient to see a clear signal yet, so the first hint is just that they both obtain a mass limit well below their expectations.

Now, suspend temporarily your disbelief and consider. If a 400-GeV t' quark exists, who is going to discover it first ? For sure CDF and DZERO with twice as much statistics (which they almost already have in their bags) would be likely to make those 2-sigma excesses become close to 3-sigma ones. Maybe adding other search channels would further increase their reach; but they would probably be unable to conclusively discover the quark.

Instead, on the other side of the Atlantic Ocean... CMS and ATLAS would be very fast in finding conclusive evidence for such a quark! The reason is that producing a 400-GeV t' quark at the LHC is much, much easier, given the over 3.5 times higher energy of the LHC collisions. The cross section at the LHC is several picobarns, which means that well before collecting an inverse femtobarn of collisions, the CERN experiments will find the new quark!
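The arithmetic behind that claim is the usual relation N = cross section × integrated luminosity. Here is a rough sketch, taking 3 pb as an assumed stand-in for "several picobarns" and ignoring branching fractions and selection efficiency unless one is passed in explicitly.

```python
PB_INV_PER_FB_INV = 1000.0  # one inverse femtobarn equals 1000 inverse picobarns


def expected_events(cross_section_pb, luminosity_inv_fb, efficiency=1.0):
    """Expected number of events: N = sigma * L * efficiency."""
    return cross_section_pb * luminosity_inv_fb * PB_INV_PER_FB_INV * efficiency


# 3 pb is an assumed illustrative value, not a number taken from the text.
n_produced = expected_events(3.0, 1.0)
print(f"t' pairs produced in 1/fb: {n_produced:.0f}")
print(f"t' pairs produced in 0.1/fb: {expected_events(3.0, 0.1):.0f}")
```

Even a tenth of an inverse femtobarn would then contain a few hundred produced pairs, before any selection is applied.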

Now, let me say something personal, deep down this long post. I have always said that, although I have been working more on the CMS experiment at CERN than on the CDF experiment at the Tevatron since 2008, my heart still beats stronger on the Tevatron side... That is still true in a sense: CDF is such a fantastic achievement for science that I will always be proud of having contributed to it for 18 years (and counting). But if you ask me which experiment I would prefer to see discovering a t' quark... I would say CMS!

The reason ? CMS and ATLAS deserve to become the focus of the next decade of high-energy physics research. Too much has been invested in human resources for these experiments to fall short of being a total success. I would love it if the adventure of the LHC experiments into the unknown were to start with a t' discovery, early next year! It would be just great!

... But now please go back and read my original disclaimer once more!

Tuesday, August 3, 2010

Things you see and things you don't see...

Of course what we didn't see was the Higgs. Many people thought we would (which meant we also saw more journalists than ever before at a physics conference), and now the next big question is: what's next? Will the Tevatron keep running for another three to four years? Will that mean it will see the Higgs? From what I hear, that's not a given, but it'll certainly be an exciting time.

Some people also saw the film Sunshine during the nuit des particules at the Grand Rex, and at the same time saw a lot of the actress Irene Jacob - that dress, and a story about balls of fire in a kitchen will go down in particle physics history.

Now it's time to see what's next - for me, that's the global Particle Physics Photo Walk next Saturday. More than 200 amateur photographers from around the world will get an exclusive look behind the scenes of five physics labs (KEK, CERN, DESY, Fermilab, TRIUMF) and we are very much looking forward to seeing our labs through their eyes.

Monday, August 2, 2010

Summary of personal impressions

It was also pretty big (for a physics conference, not in the greater scheme of such things), in fact almost too big for my taste; perhaps it's just that as a theorist I'm naturally more introverted, but I find it difficult to meet people and start a conversation when there's a huge crowd. Smaller more focussed conferences are probably better for discussions; there was also a notable lack of questions in the plenary talks -- perhaps also a symptom of excessive size.

On the other hand, the huge size means a very diverse set of speakers, which enables one to learn about all the things that have recently gone on in the wider field. Since the arXiv is getting so vast that it is well-nigh impossible to even read the titles of all papers that get posted to the hep-* sections (much less the abstracts, to say nothing of the papers -- even assuming that one had the exceptionally broad knowledge base to be able to make sense of all of them), this overview is perhaps the most important function of a large conference like ICHEP.

And the things to be learnt were of great interest: CMS and ATLAS have "rediscovered" the Standard Model; that in itself is no surprise, but the speed at which the LHC experiments have managed to get there is amazing at least for this theorist. The arrival of the LHC hasn't rung the death-knell for the Tevatron quite yet, though: while rumours of a Higgs discovery turned out to have no foundation in fact (a 2σ deviation is hardly a basis even for a rumour), CDF and D0 combined could exclude a much larger mass region for the Higgs, further narrowing down the regions where it can hide. Also from the Tevatron comes the like-sign dimuon charge asymmetry that may be the first sign of new physics if it is confirmed by another experiment. Away from the big colliders, the neutrino physicists and cosmologists are also doing impressive work and chipping away at the Standard Model's plinth. The representation of my own field of research was perhaps not optimally suited to the audience, since the parallel sessions on lattice QCD were not very well-attended except by the lattice people and the plenary talk concentrated on work that would likely have enraptured a nuclear physics audience, but probably not a HEP one. Overall, I got the impression that the experimentalists take ICHEP much more seriously as a forum than we theorists do -- there were a lot of new experimental results presented for the first time at ICHEP, whereas most of the theoretical results had been presented at other conferences or been posted on the arXiv earlier.

Blogging a conference as part of a group rather than on my own blog was an interesting new experience; for a lone blogger, ICHEP would have been way too big!

Saturday, July 31, 2010

Meanwhile in the South: CoGeNT dark matter excluded

Some time ago the CoGeNT experiment noted that the events observed in their detector are consistent with scattering of dark matter particles of mass 5-10 GeV. Although CoGeNT could not exclude that they are background, the dark matter interpretation was tantalizing because the same dark matter particle could also fit (with a bit of stretching) the DAMA modulation signal and the oxygen band excess from CRESST.

The possibility that dark matter particles could be so light caught experimenters with their trousers down. Most current experiments are designed to achieve the best sensitivity in the 100 GeV - 1 TeV ballpark, because of prejudices (weak scale supersymmetry) and some theoretical arguments (the WIMP miracle). In the low mass region the sensitivity of current techniques rapidly decreases, even though certain theoretical frameworks (e.g. asymmetric dark matter) predict dark matter sitting at a few GeV. For example, experiments with xenon targets detect scintillation (S1) and ionization (S2) signals generated by particles scattering in a detector. Measuring both S1 and S2 ensures very good background rejection; however, the scintillation signal is the main showstopper to lowering the detection threshold. Light dark matter particles can give only a tiny push to much heavier xenon atoms, and the experiment is able to collect only a few resulting scintillation photons, if any. Besides, the precise number of photons produced at low recoils (described by the notorious Leff parameter) is poorly known, and the subject is currently fiercely debated with knives, guns, and replies-to-comments-on-rebuttals.

It turns out that this debate may soon be obsolete. Peter Sorensen in his talk at IDM argues that

xenon experiments can be far more sensitive to light dark matter than previously thought. The idea is to drop the S1 discrimination, and use only the ionization signal. This allows one to lower the detection threshold down to ~1 keVr (it's a few times higher with S1) and gain sensitivity to light dark matter. Of course, dropping S1 also increases background. Nevertheless, thanks to self-shielding, the number of events in the center of the detector (blue triangles on the plot above) is small enough to allow for setting strong limits. Indeed, using just 12.5 days of aged Xenon10 data, a preliminary analysis shows that one can improve on existing limits for the scattering cross section of a light dark matter particle:

Most interestingly, the region explaining the CoGeNT signal (within red boundaries) seems by far excluded. Hopefully, the bigger and more powerful Xenon100 experiment will soon be able to set even more stringent limits. Unless, of course, they find something...

Friday, July 30, 2010

Random collection of final impressions, and a tentative balance

Since this is probably my last entry in this blog, I'll entertain you with a random collection of final impressions, and maybe a tentative balance on the blogging experience itself.

The conference itself

A lot has already been said and written, so let's simply put it this way: the conference was excellent. Superb location (Paris is always Paris), excellent venue (I was just astonished that the Palais des Congrès doesn't provide wireless microphones in the smaller rooms; everything else was perfect), very efficient organization (thanks!), and an optimal balance of contents. Ok, the catering was less-than-perfect, but why should we indulge in complaining about the little details? :-)

The LHC has entered the game

Again, not big news, but it's good to repeat it: we are beginning to see the first physics results from the LHC experiments! And even if this is not yet exciting new physics, those times are approaching fast: after more than 20 years of preparation, it's a nice sensation for the whole community.

Experiments vs theory

On the downside, I must say that I found the theory contributions in the first part of the conference a bit isolated. This is probably normal in the context of parallel sessions (and there were anyway good phenomenological contributions in the sessions more oriented to experiment), but as an experimentalist I probably missed the opportunity to learn something really new for me. For instance, I learned from Georg that:

the talks in the lattice session had actually been selected to be accessible and of interest also to people outside the lattice community (in particular there were a number of review talks), so it was a bit of a pity that the talks were attended almost exclusively by lattice theorists.

I agree: pity! Maybe this should have been advertised more? The situation was of course different in the second part of the conference, and I really appreciated some of the more theory-oriented talks in the plenary sessions.

"Sliduments" vs nice talks

The quality of the talks was in general rather good, and of course was at its best in the plenary sessions. I nevertheless had the impression that the non-LHC and non-Tevatron speakers gave the best talks in the parallel sessions. I have a theory, at least for the LHC talks. Nowadays we (the LHC experimental physicists) routinely use slides as a support for documenting our daily work. Most of us have taken up the (bad!) habit of packing them with all the information we want to record, information that should anyway go into a written report, sacrificing the graphical quality, and the effectiveness as a visual support for an oral presentation, in favor of a hybrid object that the experts in the field call a slidument. Sure, it's possibly something easier to present: one can pretend to use the text on the slide as a reminder of what to say, maybe even avoiding the need to rehearse. Well, the quality of this kind of presentation will definitely be worse, it's guaranteed, and, even if it may be fine for a weekly collaboration meeting, it will certainly not meet the standard needed for a conference. Have a look at the slides of some of the presentations in the plenary session of Wednesday, for instance the ones on dark matter or cosmology:

Almost no text, just the few words needed to stress the concept, a clear figure, no clutter. Sure, the speaker must know what to say on this slide! Now compare for instance with this one (taken from an ATLAS talk, so that nobody can say I try to blame only our competitors):

No excuse, we still have a lot to learn!

Blogging ICHEP 2010

I am still digesting the experience, and in this sense I'd really appreciate getting some feedback from the readers on this. On my side, I can say it has been interesting to blog a conference (it was a first for me) and to do it in a collective blog, with different voices and styles.

Some of the feedback I got tells me that the blog has been appreciated outside, especially by the colleagues who were not attending the conference: apparently it helped them feel connected, more than webcasts and slides alone can do. It might also have helped the journalists reporting on the conference for the media: a blog like this can certainly act as a filter, and help the non-physicist to grasp what's important, what gets us excited, and why.

This (semi)official blog of the conference was an experiment, and in this respect the organizers wanted to keep a low profile, and verify in the field what the reactions would be. It seems to me that, if the community is indeed interested in the format, maybe next time something slightly more ambitious could be tried. For instance, with a bit more organization we could have had some video interviews at the conference (someone did that, and did it very well indeed), a dedicated Twitter stream, and especially more visibility at the conference itself. I had in fact the impression that, at least at the beginning of the conference, a large part of the participants had no idea that this project existed at all. And, since the most interesting and useful part of the blogging experience is the conversation with the readers, this could have been even more fun.

Anyway, I would probably do it again, should the occasion come. See you in two years in Melbourne?

The ICHEP Effect

I created a tool that watches how many plots DZERO, CDF, ATLAS, and CMS release as a function of time. Here are the results for this year (each little square is a plot):

I’m going to call that bump in July there the ICHEP effect.

Thursday, July 29, 2010

The CMS Momentum Scale And Resolution

The dull sound of the topic as stated above should not deceive you: this is a really exciting, interesting technology, which allows the measurement of physical quantities with high precision. Since the M in CMS stands for "muon", we certainly care about the precise measurement of muons, and muons are the particles used for the calibration procedure.

What happens when a charged particle leaves ionization deposits ("hits") in the silicon tracking system is that we can reconstruct its trajectory, forming a track. The track is curved in the plane transverse to the beam, because the S in "CMS" stands for "solenoid", a big cylinder that provides a B = 3.8 Tesla magnetic field within its volume. If you know what the Lorentz force is, you might also remember the formula P = 0.3 B R (with P in GeV, B in Tesla, and R in meters), expressing the proportionality between the momentum of a charged particle and the radius of curvature of its trajectory in a magnetic field. This demands that within the CMS solenoid a P = 1.14 GeV muon follow a curved trajectory, which resembles a circle of radius R = 1 meter if observed in the "transverse" plane to the beam axis, the one along which the solenoid is symmetrical. By measuring the curvature, we determine the transverse momentum!
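The numbers quoted above can be checked in a few lines. This is only a sketch of the formula P = 0.3 B R for a unit-charge particle; the function names are made up for illustration.

```python
B_CMS_TESLA = 3.8  # solenoid field quoted in the text


def transverse_momentum_gev(b_tesla, radius_m):
    """Transverse momentum (GeV) of a unit-charge particle from P = 0.3 * B * R."""
    return 0.3 * b_tesla * radius_m


def curvature_radius_m(pt_gev, b_tesla):
    """Inverse relation: radius of curvature (meters) for a given transverse momentum."""
    return pt_gev / (0.3 * b_tesla)


# A track with a 1 meter radius in the 3.8 Tesla field (the 1.14 GeV muon of the text).
print(f"pT = {transverse_momentum_gev(B_CMS_TESLA, 1.0):.2f} GeV")

# Stiffer tracks bend less: the radius grows linearly with momentum.
print(f"R for a 10 GeV muon = {curvature_radius_m(10.0, B_CMS_TESLA):.2f} m")
```

Since high-momentum tracks are nearly straight, small errors in the measured curvature translate into large relative errors in momentum, which is why the calibration matters.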

Things are always complicated if you want perfection. We of course can measure the position of the silicon hits with extreme accuracy, but alignment and positioning errors may create imperfections in the measurement of the track curvature. We also know the magnetic field with high accuracy, through Hall probes and other means, but imprecisions will affect the momentum measurement. Finally, the amount of material of which the tracking detector is composed affects the trajectory, producing further imprecisions if our map of the material is not perfect.

In the end, all the effects and all the details of the geometry of our detector are encoded in a carefully crafted simulation. With the simulation we can figure out what a 1-GeV track would look like, given our reconstruction and our assumptions about geometry, material, and magnetic field. But we need real data to verify that our model is correct, and to tune it in case it is not!

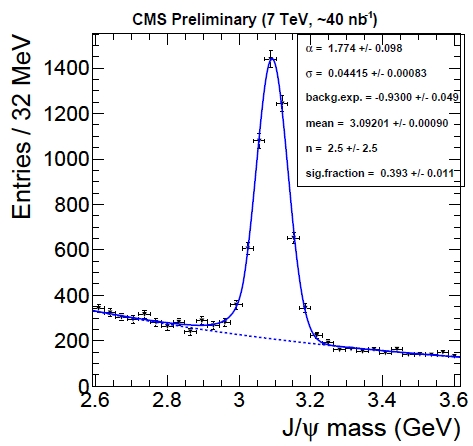

Real data: we now have it. CMS uses resonance decays to opposite-charge particles for this business: they are easy to identify, have little background, and there are plenty to play with. In particular, we use J/Psi meson decays to muon pairs for some of the checks of the momentum scale and resolution. Other dimuon resonances are also used (there is a large amount of such decays already available in the data so far collected), but here I will only discuss what CMS did with its J/Psi signal.

The dimuon mass spectrum in the vicinity of the nominal J/Psi mass value is shown in the picture below. A large number of signal events is observed. These events can be used to calibrate the momentum scale.

If one looks closely, one observes that the measured mass is very slightly lower than the nominal 3.097 GeV. This is already evidence for a very small underestimation of the momentum scale. To dig further, a simple thing one can do is to divide the J/Psi events depending on the value of the particle's reconstructed momentum or rapidity, measuring the mass in all sub-samples to check if in particular kinematical regions there is a bias. The bias, of course, would arise from the momentum reconstruction of the individual muons; but if one only measures the mass, which is a quantity constructed with the measurement of two muons, surely only an "average" bias can be detected, right ?

Wrong. Each muon from the decay of each J/Psi has a different momentum, travels through different parts of the detector, and is subjected to different reconstruction biases: we can turn these differences to our advantage. What we can do is to assume we know the functional form of these biases, and plug them into a likelihood function.
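To make this concrete, here is a minimal sketch (not the CMS code) of how a momentum-scale bias on the individual muons propagates to the dimuon mass. The helper names `four_vector`, `dimuon_mass` and `scale_pt`, the example kinematics, and the modelling of the bias as a simple multiplicative factor on the transverse momentum are all assumptions made for illustration.

```python
import math

MUON_MASS = 0.10566  # GeV


def four_vector(pt, eta, phi, mass=MUON_MASS):
    """Return (E, px, py, pz) in GeV from transverse momentum, pseudorapidity and azimuth."""
    px = pt * math.cos(phi)
    py = pt * math.sin(phi)
    pz = pt * math.sinh(eta)
    energy = math.sqrt(px * px + py * py + pz * pz + mass * mass)
    return energy, px, py, pz


def dimuon_mass(mu1, mu2):
    """Invariant mass of two muons, each given as a (pt, eta, phi) tuple."""
    energy, px, py, pz = [a + b for a, b in zip(four_vector(*mu1), four_vector(*mu2))]
    return math.sqrt(max(energy * energy - px * px - py * py - pz * pz, 0.0))


def scale_pt(mu, k):
    """Apply a hypothetical relative scale bias k to the muon transverse momentum."""
    pt, eta, phi = mu
    return (pt * (1.0 + k), eta, phi)


# Hypothetical muon pair (pt in GeV, eta, phi), chosen only for illustration.
mu_plus = (4.0, 0.3, 0.1)
mu_minus = (3.5, -0.5, 2.4)

m_true = dimuon_mass(mu_plus, mu_minus)
k = -0.001  # a 0.1% underestimate of the momentum scale
m_both = dimuon_mass(scale_pt(mu_plus, k), scale_pt(mu_minus, k))
m_one = dimuon_mass(scale_pt(mu_plus, k), mu_minus)

print("both muons biased:", m_both / m_true)
print("one muon biased:  ", m_one / m_true)
```

For nearly massless muons the squared mass is proportional to the product of the two transverse momenta, so a relative bias k on both muons shifts the mass by about k, while the same bias on only one muon shifts it by about k/2. This is why events whose two muons cross different detector regions carry information on the bias of each region separately.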

A further benefit with respect to methods I have seen in the past for the correction of scale biases is that a well-written likelihood function is also capable of extracting the momentum resolution from the same set of data. One just needs to produce a functional form (whose exact shape is suggested by simulation studies) that describes how the resolution on the momentum depends on the track kinematics; then, the likelihood fit will take care of finding the best parameters of the resolution function as well, by comparing the expected lineshape of the resonance with the mass value measured for each particle decay.

The likelihood is very complicated, because it accounts for the dependence of the mass on the muon momenta and the resolutions, and momenta and resolution in turn are functional forms of bias parameters. I know very well the code of this likelihood function, and I can tell you it is not for everybody! So I will abstain for once from finding a suitable analogy, lest I squeeze my brains for the rest of the evening. Let me just say that in the end, the likelihood maximization produces the most likely value of the parameters describing the bias functions, allowing a correction of the bias in the track momentum measurement!
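For readers who want a feel for the idea without the real code, the following is a toy sketch under heavy simplifying assumptions, and in no way the CMS likelihood. It assumes a scale bias of the hypothetical form 1 + a + b·η² for each muon, linearises its effect on the mass, uses a Gaussian lineshape with a single constant resolution (instead of a Crystal Ball shape and a kinematics-dependent resolution), and draws muon pseudorapidities uniformly. With a Gaussian lineshape, maximizing the likelihood for `a` and `b` reduces to a least-squares fit, and the maximum-likelihood resolution is the r.m.s. of the residuals. All names and numbers are illustrative.

```python
import math
import random

M_JPSI = 3.097  # GeV, nominal mass quoted in the text


def simulate(n_events, a_true, b_true, sigma_true, seed=1):
    """Toy J/Psi sample: returns a list of (x, measured_mass).

    x = (eta1^2 + eta2^2) / 2, so that the linearised mass scale is 1 + a + b * x.
    """
    rng = random.Random(seed)
    events = []
    for _ in range(n_events):
        eta1 = rng.uniform(-2.4, 2.4)
        eta2 = rng.uniform(-2.4, 2.4)
        x = 0.5 * (eta1 ** 2 + eta2 ** 2)
        expected_mass = M_JPSI * (1.0 + a_true + b_true * x)
        events.append((x, rng.gauss(expected_mass, sigma_true)))
    return events


def fit(events):
    """Maximum-likelihood estimates of (a, b, sigma) for the Gaussian toy model."""
    n = len(events)
    xs = [x for x, _ in events]
    ys = [m / M_JPSI - 1.0 for _, m in events]  # relative mass shift per event
    sx, sy = sum(xs), sum(ys)
    sxx = sum(x * x for x in xs)
    sxy = sum(x * y for x, y in zip(xs, ys))
    b = (n * sxy - sx * sy) / (n * sxx - sx * sx)
    a = (sy - b * sx) / n
    # Resolution in GeV: r.m.s. of the residuals around the fitted scale.
    residuals = [(y - a - b * x) * M_JPSI for x, y in zip(xs, ys)]
    sigma = math.sqrt(sum(r * r for r in residuals) / n)
    return a, b, sigma


toy = simulate(20000, a_true=-0.001, b_true=-0.0008, sigma_true=0.030)
a_fit, b_fit, sigma_fit = fit(toy)
print("a =", a_fit, " b =", b_fit, " sigma =", sigma_fit, "GeV")
```

The same set of events returns both the parameters of the bias function and the resolution, which is the feature highlighted above; the real fit does this with far richer functional forms for both.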

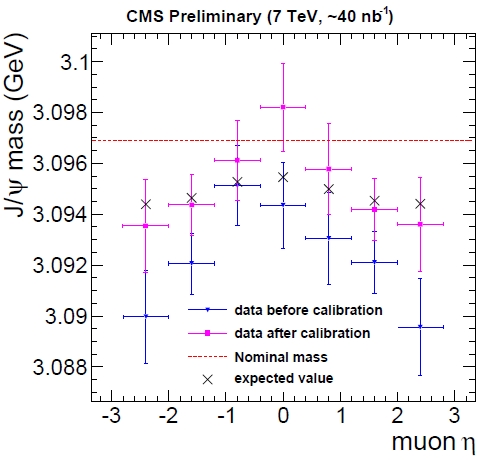

Maybe it is best to show a couple of figures. The first one below shows the average mass of the J/Psi meson as a function of the pseudorapidity of the muons from its decay. The hatched red line shows the true value of the J/Psi mass; but more meaningful are the crosses, which show what should be measured with a perfect detector, given the fitting procedure (which, I am bound to specify, assumes that the lineshape follows a Crystal Ball form). The crosses are our "target": if we measure a mass in agreement with them, given our fitting procedure to extract the mass, our momentum scale is perfect.

In blue you can see that the mass, before corrections, is biased low, especially at high rapidity. Instead, after the likelihood maximization and the correction procedure, we obtain the purple crosses. The agreement with the black crosses is still not perfect, and the statistics is too poor to detect further small deviations, but the demonstration of the validity of the procedure is clear!

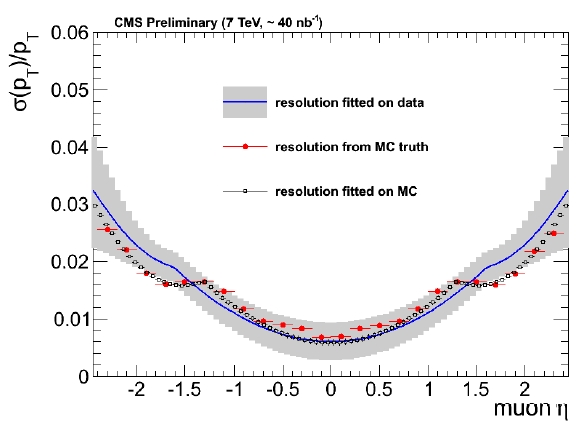

And then, the resolution. This is also a function of rapidity in CMS, due to the way the detector is built and the decay geometry. The figure below shows what resolution we expected to measure as a function of rapidity, from simulated J/Psi decays (in black), given the measurement method.

In red the figure also shows what the true resolution is, from simulated muons that are then compared to reconstructed ones. In blue, the band shows what instead CMS measured. The agreement between data and simulation is encouraging, and the result demonstrates the validity of the method. This functional form and its parameters are extracted from the way the reconstructed masses of J/Psi decays distribute around the nominal mass, accounting for the fact that muons in those events have different rapidity: the likelihood knows all the details, and produces a very complete answer to our question.

I think the method is very powerful and I cannot wait to see it applied to all resonances together, with more data -the different dimuon resonances have different kinematics and produce muons of widely varied momenta, allowing a very complete picture of the calibration and resolution of the CMS detector!

Wednesday, July 28, 2010

Final days, brief summary

Unfortunately, the lattice QCD talk did perhaps not make the best of the opportunity to convince a larger HEP audience of the importance of lattice results, since Yoshinobu Kuramashi chose to pass by the many important contributions that lattice QCD can make and has made towards flavour physics, and concentrated on the derivation of nuclear properties from lattice QCD instead. This is clearly a very important topic and replacing phenomenological models with true first-principles predictions from QCD will have an enormous impact on nuclear physics; the present audience of experimental high-energy particle physicists and Beyond-the-Standard-Model theorists might have been more excited to hear about lattice determinations of the decay constants and semileptonic form factors of heavy mesons, however.

The decays of B and Bs mesons are also the field in which the most exciting discrepancies between experimental results and Standard Model predictions keep appearing. While the Ds discrepancy of last year has disappeared in the meantime, there is now some tension in B meson decays, the most significant of which (at 3.2σ) is the like-sign dimuon charge asymmetry measured at the Tevatron. However, combining all results from D0 and CDF gives a discrepancy of less than 2σ when compared with the Standard Model, while all individual results are mutually compatible within errors. So perhaps this is again a fluctuation, or else something really weird is going on here. Phenomenologists may have a clearer picture of what that could be, see the post by Jester.

Today (i.e. Wednesday) started out with a session on neutrinos. Neutrinos have their own version of flavour physics -- neutrino oscillations. The MNS matrix, which is the leptonic analogue of the CKM matrix, is a lot less well-known than its hadronic cousin, however. In particular, it is not clear whether the mixing angle θ13 describing the mixing between the first and third generations is non-zero, although recent results indicate that it likely is. The flavour structure of the neutrino sector might be much richer than the quark one, though, since the existence of sterile neutrinos (i.e. neutrinos not partnered with a charged lepton via the weak interactions) cannot be ruled out at present.

The neutrinos detected at great effort by huge detectors can come from a variety of sources, some of which got their own talks: Alain Bellerive talked about solar neutrinos (i.e. the ones created by nuclear fusion in the Sun), where the Sudbury Neutrino Observatory (SNO) is now beginning to achieve precision measurements, which favour a scenario of large neutrino mixing angles. Tsuyoshi Nakaya presented long-baseline accelerator neutrino experiments (where neutrino beams are created at an accelerator from the leptonic decays of a beam of charged particles), among which OPERA has recently reported the first candidate for a ντ oscillation. Fabrice Piquemal spoke about reactor-based experiments (where the neutrinos come from the reactions in nuclear reactors) and single and double β decays; the tail of the energy distribution of the electrons produced in β decays can impose an upper limit on the mass of the electron neutrino (the limit of 2.3 eV does not seem terribly competitive with other limits, however), and the observation of neutrinoless (or "0ν") double β decay would establish that neutrinos are Majorana particles, identical to their antiparticles.

Today's second session was about the links between particle physics and cosmology: Dark matter is one of the big "known unknowns" in our present understanding of the universe. What is known is that it cannot be normal (hadronic) matter because of limits imposed by Big Bang nucleosynthesis, and that it cannot be neutrinos, either. The best candidate would be a WIMP (i.e. a Weakly Interacting Massive Particle), such as the lightest stable superpartner of a Standard Model particle if SUSY exists in nature. The discovery of such a particle at the LHC would thus have significant impact also on cosmology.

Trouble with Flavor

Overall, the standard model explains frustratingly well the multitude of observed transitions between quarks and leptons of different generations. If we extend the standard model with generic non-renormalizable 4-fermion operators, their coefficients are extremely constrained by experiment. The scale suppressing certain flavor-violating operators has to be at least 100 TeV in the bs sector, at least 1000 TeV in the bd sector, and an astounding $10^5$ TeV in the ds (kaon) sector. It means that if any new particles exist at the TeV scale they had better be very careful not to destroy the approximate flavor symmetries of the standard model, as otherwise they would generate effective 4-fermion operators with large coefficients.

That probably means that at the TeV scale there are no new particles beyond those of the standard model. There is still some hope, however, that the above is not true, and this forlorn hope is fueled by three results that are currently in tension with the standard model predictions. One is the widely discussed D0 measurement of the same-sign dimuon asymmetry, which points to new contributions to $B_s-\bar B_s$ meson mixing at the 3.2 sigma level. The two other, less widely known discrepancies are:

- Various determinations of the beta angle in the unitarity triangle (determined most precisely from $B_d \to J/\psi K_S$ decays, and from the $\epsilon_K$ parameter in the kaon mixing) do not agree very well. The current discrepancy is around 2.5 sigma.

- The branching fraction of the $B \to \tau \nu$ decay measured by BaBar and Belle is currently two times larger than the standard model prediction. Given the errors, the current discrepancy with the standard model is around 3 sigma.

Theorists have put up several models that may fit all up-to-date flavor observables and explain the existing anomalies. For Gino, the favorite model is the 2-Higgs doublet model. In this scenario, the Higgs sector contains 4 additional scalar particles that can mediate flavor-violating transitions. The point is that they do it in a very respectful way, including the suppression by small CKM angles and by small quark masses, so as not to produce excessively large effects even when the new Higgs particles are at the TeV scale. The quark mass suppression leads to the desired pattern where the smallest new contributions come in the kaon sector, while the largest occur in the Bs meson sector. This scenario also predicts new contributions to $B_s \to \mu \mu$ decay and to the neutron electric dipole moment at the level of the current sensitivity, so fresh tests of this idea are soon to come.

For more details, see the ICHEP'10 slides. As a bonus, a very accurate rendering of theorists waiting for hints of new physics in flavor physics.
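To put the flavor bounds quoted earlier in this post into numbers, here is a back-of-the-envelope sketch. It assumes the simplest possible scaling, which is an assumption of this illustration rather than something stated in the talk: a 4-fermion operator generated by new particles of mass M with a dimensionless coefficient c appears with strength c/M², and the experimental bound requires c/M² to be no larger than 1/Λ². The function name `max_coefficient` and the choice M = 1 TeV are illustrative.

```python
def max_coefficient(new_physics_mass_tev, bound_scale_tev):
    """Largest coefficient c allowed by c / M^2 <= 1 / Lambda^2 (naive scaling)."""
    return (new_physics_mass_tev / bound_scale_tev) ** 2


# Lower bounds on the suppression scale quoted in the text, in TeV.
bounds_tev = {"bs": 1e2, "bd": 1e3, "ds (kaon)": 1e5}

for sector, scale in bounds_tev.items():
    print(sector, max_coefficient(1.0, scale))
```

Under this naive scaling, particles at 1 TeV need their flavor-violating coefficients suppressed by roughly four, six and ten orders of magnitude in the bs, bd and kaon sectors respectively, which is the kind of hierarchy that CKM and quark-mass suppression is meant to provide.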

How can there be no questions?

I’m sitting here, on the last day of ICHEP, listening to some excellent summary talks. One of the experimental neutrino talks just went by – excellent (sorry, no link because I don’t have internet as I write this). But there were no questions. I’ve noticed this for many of the talks at the plenary. Or if there is a question, it is the “if you doubled your dataset what would happen to that 2 sigma excess?”

How can this be? There are almost 1000 people watching these talks, many of them experts in the topics being discussed. And no questions? Physics is built on questions! This is how we learn – almost never is something so clear that we don’t need questions! Heck, the whole field is structured around this. We invite experts to our University to give a seminar so we can have their undivided attention for a whole day to ask them questions. We go to workshops so we can ask each other questions and learn. We write papers and then respond to the papers with letters and more papers which are basically a slow version of Q&A. So what is going on here?

I don’t know, of course, however I’m going to take a stab:

- Exhaustion. It is the end of a full week of conference. We’ve all been talking, discussing, and thinking about these topics for a solid week and we need a break for our brains to process everything.

- Embarrassment. There are 1000 people here. Many of them very important people in the field (i.e. they think you are stupid and your chances of funding go down). So you’d better make sure you have a good question before you ask it.

- These are summary talks. The speaker is often presenting a huge amount of information in a very short amount of time. It is likely they are an expert in the topic they are presenting, but given the volume of information discussed in such a short amount of time, it is not likely they know all the details. The people who are experts on each topic gave talks in the parallel sessions. Indeed, there were lots more questions there than there are in the plenary sessions.

I’m sure there are other possibilities as well.

Tuesday, July 27, 2010

Back to the future

But now, here goes! Saturday saw a big overview of all the exciting and less exciting future projects for the world of particle physics. I leave it up to your judgement to decide which is which, comments are welcome! I am also arranging them by my brain's personal sorting system and will happily accept comments and corrections - the list is probably not complete.

Particle physics is a global field. You just have to look around the room to notice that people come from all over the place. The big machines that we work on these days are challenging and cost a lot of money so that no one country could afford to build and host them - all countries have to chip in and work together. The more challenging the technologies become the more this is the case, and it also takes many years for a machine to evolve from idea to design study to running accelerator. Consequently it may seem strange to the outside world that while we've only just switched on the LHC and are waiting for discoveries, we already plan the next generation – but we have to have a variety of options in the drawer that will enable us to make the best choice when results are there. And then of course there are physics topics that aren't covered by the LHC!

There are several ideas for 'LHC follow-ups'. Two different varieties of LHC upgrades exist - one for more luminosity and thus higher statistics and safer discoveries, one for higher energies. Whereas one, the luminosity upgrade, is virtually around the corner, the energy upgrade is an option that's far in the future (around 2030, according to Roger Bailey's talk from Saturday). The discoveries at the LHC will probably dictate whether the higher energies are more interesting, or whether the LHC could be transformed into an electron-proton machine, or a 'superHERA', although it goes by the name of LHeC in the session, with the e for electron. LHeC would collide the LHC's protons with electrons from a linear collider - an intriguing thought for someone working on the ILC! My imagination already went off into dreamscapes where LHeC and the linear collider would run together on different physics programmes as the best possible synergy of machines we have yet to see (and believe me, physicists are great at creating synergies and reusing existing machines!). I guess I'll have to talk to a few proper scientists to check whether this is imagination running wild or whether it's actually possible.

Certainly possible and the most likely next big project is a linear collider for electron-positron collisions. It'll complement the LHC and it's only a question of LHC discoveries, again, whether the ILC or CLIC is the machine of choice. While the ILC is basically ready to be built - the Technical Design Report is due in 2012, which means "Here's how we would build it", CLIC is a few years behind, with its Conceptual Design Report due next year. When you think that first collisions from a linear collider could be expected in the 2020s you start to understand why there is plenty of planning, designing and testing going on around the world!

Then there are b factories, machines that would complement and extend anything that the b physics experiments around the world, like the LHCb experiment at the LHC, find. One is proposed in Italy, and KEK in Japan has just started reconfiguring its KEKB accelerator into - can you guess its name? - SuperKEKB. Funding isn't final but they are planning to get 40 times more luminosity. The Italian b factory would also be a light source, and it shares its multifunctionality with Fermilab's 'Project X', which can contribute to the ILC, to a possible muon collider and ultimately a possible neutrino factory. Fermilab is busy working on a plan for a muon accelerator program (i.e. a MAP), and muon colliders, though technologically still a big challenge, are also a big topic for machine physicists. Probably something called 'dielectric acceleration' is, too, but I couldn't tell you as I didn't understand the talk, sorry... But when asked whether there is a plan for a beam delivery system, the speaker laughed and said that he'd like to have this question again in 10-15 years -- so I conclude it's not something that would pop up in the next months.

What I missed in almost all of the talks were good, catching, convincing arguments why these machines that were proposed are needed. I am sure there are solid physics cases for all of them, but surely it can't harm to state them again, clearly, understandably, in talks like these that will live on for a while? I'll go hunting for them for a future story in NewsLine, but first I go hunt particles in the Grand Rex, the nuit des particules -- see you there!

A Spectroscopist's Delight!

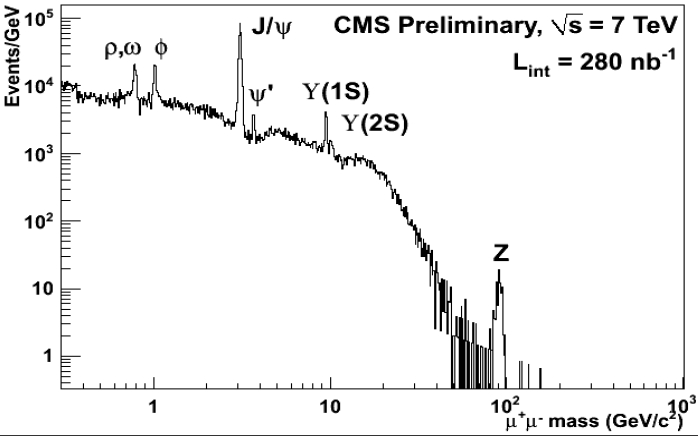

If you do not like the figure below, courtesy CMS Collaboration 2010, you are kindly requested to leave this blog and spend your time reading something else than fundamental physics. I do not know what will ever make you believe particle physics is beautiful, if not what is shown here.

The figure shows, using a logarithmic scale on both axes, the reconstructed mass of pairs of muon candidates of opposite charge, collected by CMS in its first 280 inverse nanobarns of 7-TeV proton-proton collisions, recorded until a week ago. Nothing fancy has been done to prettify this graph: these are honest-to-god muon pairs, as Nature (the bitch, not the magazine) has produced them in the core of CMS. True, the intercession of a detector and of reconstruction software was needed to go from ionization clouds to event counts; but this is the absolute minimum of manipulation you can ever expect from particle signals.

Now, what should enthuse you about the graph is the following. The distribution reveals, clearer than a million words could describe, the structure of all the most important bound states decaying by electroweak interactions into pairs of muons which we can produce in hadron collisions. We immediately spot the Z boson on the far right, and the towering peak of J/Psi mesons; but we also see Upsilon mesons, and at lower energy, we detect the lighter resonance decays of rhos, omegas, and phi mesons. What a spectroscopist's delight! This figure is tremendously informative! If we sent it to outer space, without labels or units, no intelligent race could ever mistake its meaning!

You also notice that these jewels stand atop a background of unidentified muon pairs. Muons can be produced singly by the weak decay of kaons and pions, for instance, or even more massive states like bottom and charm. Occasionally, pairs of muons of opposite charge can emerge that do not have the same parent: the frequent production of these uncorrelated pairs creates the significant backgrounds you see in the picture. Note, however, how these backgrounds die out for large dimuon masses: the Z boson is basically background-free, a fact I have noted in my previous posting here.

As these pages testify, CMS and ATLAS have presented scores of interesting physics results at ICHEP this week. None of those were groundbreaking ones; a few were significant advances, though, and many others were just meant to demonstrate that the experiments are ready for big challenges, such as discovering new physics, the Higgs, measuring the top mass better than the Tevatron, etcetera. The presented results took about a hundred man-years to produce, and I have a lot of respect for them -not to mention the fact that I did my little bit to contribute. But it is my humble opinion that the graph shown above could well be the one to single out and attach on the bulletin board of all the universities and institutes participating in the LHC experiments!

Paris est la France, et la France est Paris

While there are certainly good reasons to identify the French grandeur with the capital city, there is certainly more in France than just Paris. And I'm not only thinking about the food variety that we experienced yesterday evening at the conference dinner, where the excellent food specialties of five different French regions were served. Actually, that we should have experienced, and that were supposed to be served: in reality the food flow was definitely not enough to satisfy the appetite of the disappointed conference participants. But this is another story.

This valuable variety (of fine food, nice places, and of other excellent things) is rarely recognized by the Paris people - that's a fact - but it was rather unfortunate to discover that this point of view seems to be shared by the French President himself. As you might have heard, Nicolas Sarkozy attended the ICHEP conference yesterday to give an "opening" talk (well, the conference had already been open for four days, but still). Now, I really don't want to indulge in commenting on the speech, nor do I dare to discuss the subtleties of French research politics. But I cannot refrain from at least noting (at a very superficial level indeed) that both in the announcement of the President's participation, and especially in the President's speech, the French excellence in high energy physics seemed to be contained in a circle of 30 km radius centered on the Tour Eiffel.

I can tell you, this has definitely not pleased those ATLAS colleagues of mine working for instance in Annecy or Marseille, and I guess the other French physicists working for instance in Grenoble, or Clermont-Ferrand, or Strasbourg must not be that happy either. Oh, yes, the press office of the Élysée has finally changed the initial announcement, and now they are cited along with the Paris universities and research centers as "universités de province", but I'm still not sure they are at ease with the classification. And, despite the last-minute change on the web site, the President's speech itself was still confined to the "region parisienne"! Are the Paris people really so distracted? I would tend to doubt it, but again, this would lead me to speculate about the current French research politics, and I'm certainly not qualified for this. Pity, anyway.

Physicists gone wild

(Here's a photo of physicists in the wild, during the calm before the food-induced storm.)

But the point of conference dinners isn't really food, but to talk to old and new colleagues, and that went off without a hitch. The food situation also provided a perfect ice-breaker for conversation, and I heard many a story about banquet disasters from conferences past.

Tonight will see a gathering of a very different sort in Paris: the Nuit des Particules, a particle-physics-themed evening for the public. The event at the Grand Rex theater in Paris starts off with a public lecture by scientist Michel Davier (in French), at which French actress Irene Jacob will also be present, and ends with a showing of the science fiction film Sunshine (in English).

Day 4: the Higgs is not there. Yet.

But we also know that, if it exists, we would not find it in the 158-175 GeV mass range. The saga of the Tevatron Higgs talks finally came to an end, certainly matching the expectation, at least as far as the show part is concerned. Well, as for the scientific part, after all the preliminary steps of the last days nobody was really expecting any hint of signal anymore. We mortals might not be able to make fancy statistical combinations by eye, but it was still not too complicated to anticipate that, since there was no excess in any channel or single detector combination, we should not have expected any dramatic announcement.

I found Ben's talk really excellent: the slides (that, by the way, were kept secret until the very last moment) were very well prepared, and Ben proved to be an excellent presenter. In a sense he was even rather humble, especially if you think about the fuss about a possible signal before the conference (at slide 46: "I'm sorry, this 2 sigma excess is the closest we have to a discovery"!).

Still - but maybe it's just me - I had the feeling that the presentation contained a subtle innuendo. Of course Ben did not dare to say anything that was not scientifically backed, but take for instance slide 36. Ben gently dropped this CDF plot:

Monday, brief summary

A significant discrepancy between theory and experiment was, however, observed at the banquet: theory predicted a stable circulating beam of physicists scattering elastically off several targets representing the culinary traditions of the different French regions. Experimentally, a lot of pile-up events were observed, leading to early depletion of the targets and a failure to achieve saturation for many participants ... clearly, some more effort needs to go into the modelling of such high-density situations in culinary physics.

Monday, July 26, 2010

The present and future of the LHC

A fun way to browse ICHEP talks

One of my hobbies is better ways to visualize information with modern computers. Those of you that have followed me know about my efforts with DeepZoom. So, ICHEP is being rendered this way as we speak.

If you click on the link above your browser will load up a very large image using Silverlight (which you’ll need to have installed: Windows and Mac computers only, I’m afraid). You’ll see about 400 talks all displayed at once. You can then use your mouse and mouse wheel to zoom in to see a particular talk. Your browser should load up the bits of the talk you are looking at. You can click on a slide to bring it full screen and use the back and forward arrow keys to move around.

BUT: you need a decent computer, decent graphics card, and, above all, a decent internet connection. In short, no one at ICHEP can look at this display because the internet connection is not robust enough (I’d add a picture here but I’m at ICHEP and can’t load it up right now!).

The display is updated about once every 4 hours, so as the plenary talks continue they should slowly show up there in that display.

Enjoy!

Higgs still at large

Tevatron now excludes the standard model Higgs for masses between 156 and 175 GeV. The exclusion window widened considerably since the last combination. Together with the input from direct Higgs searches at LEP and from electroweak precision observables it means that Higgs is most likely hiding somewhere between 115 and 155 GeV (assuming Higgs exists and has standard model properties). We'll get you bastard, sooner or later.

One interesting detail: Tevatron can now exclude a very light standard model Higgs, below 110 GeV. Just in case LEP people missed it ;-) Hopefully, Tevatron will soon start tightening the window from the low mass side.

Another potentially interesting detail: there is some excess of events in the $b \bar b$ channel where a light Higgs could possibly show up. The distribution of the s/b likelihood variable (which is some inexplicably complicated function that mortals cannot interpret) has 5 events in one of the higher s/b bins, whereas only 0.8 are expected. This cannot be readily interpreted as the standard model Higgs signal, as then one would also expect events at higher s/b where there are none. Most likely the excess is a fluke, or maybe some problem with background modeling. But it could also be an indication that something weird is going on that does not fit the standard model Higgs paradigm. Maybe upcoming Tevatron publications will provide more information.
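As a rough cross-check of how unusual 5 events are when 0.8 are expected, here is a naive Poisson estimate. It is only a sketch: it treats the expected background as exactly known and looks at this one bin in isolation, ignoring that many bins were inspected, so it overstates the significance and is not the statistical treatment used by the Tevatron experiments. The function names are illustrative.

```python
import math


def poisson_tail(n_observed, expected):
    """Probability of observing n_observed or more events for a Poisson mean `expected`."""
    below = sum(
        math.exp(-expected) * expected ** k / math.factorial(k)
        for k in range(n_observed)
    )
    return 1.0 - below


def one_sided_z(p_value):
    """Gaussian significance Z such that the one-sided tail beyond Z equals p_value (bisection)."""
    lo, hi = 0.0, 10.0
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if 0.5 * math.erfc(mid / math.sqrt(2.0)) > p_value:
            lo = mid  # tail still too large: Z must be bigger
        else:
            hi = mid
    return 0.5 * (lo + hi)


p_local = poisson_tail(5, 0.8)
print("local p-value:", p_local)
print("local significance (sigma):", one_sided_z(p_local))
```

The local p-value comes out at the per-mille level, i.e. around 3 sigma for that single bin; once the number of bins examined and the background uncertainty are accounted for, "most likely a fluke" remains a perfectly reasonable reading.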

A Big Day

I was going to post this before anything started this morning, however the ICHEP committee decided not to purchase wifi in the main conference hall, so it took until now for me to have time to post this….

Today is the first day of plenary talks at ICHEP. Up to now it has all been parallel sessions – and there have been a lot of talks. Now everything collapses into one room. And it is a very big room. I don’t think I’ve ever been in a room this big for a physics conference before.

Several things happen today – but two I’m particularly interested in seeing are the final combination of the Tevatron Higgs searches and a speech by the president of France, Sarkozy.

Sarkozy's speech was much better than I was expecting! He and his speech writers had worked together to craft a quite good message. One part was a vigorous defense of why it is a good idea to invest in science now even though there is a financial crisis on. Basically, his message boiled down to: a country can not ignore the future for the crisis of the present – there is always a crisis of the present. A message I wish more of the legislators in the state of Washington would get. The second half of his message was the details of the investments that France is making. You can’t always trust the numbers that politicians give you in settings like this, but one stuck out: a reinvestment in Saclay – one billion Euros in the next 10 years.

The second thing I’m looking forward to are the combined results from the Tevatron. You can guess what will happen when you look at the CDF and DZERO higgs talks (which I’d link to if there was any wireless at all here!). Both experiments are basically excluding the region around 160 GeV on their own, and both have a downward fluctuation at low mass.

Update from the Higgs talk:

Ben’s fashion choice: Tour de France yellow tee shirt along with a suit jacket. Nice!

High mass region: from 158 – 175 GeV is now excluded (about x4 bigger than before).

Low mass region: Starting to exclude the really low mass region as well now. Expected limit is around 1.2 or 1.4 or so. But the exclusion right now looks like a fluctuation low, so it will be very interesting to watch the next update 6 months from now. The expected is getting quite close to the expected SM cross section! Sweet!

Sunday impressions

I was quite impressed by the diversity and level of the street music in Paris once again: at the Pont Neuf there was a man playing Handel's Music for the Royal Fireworks ... on a steel drum! Not exactly an orthodox instrumentation, but listening I thought il bravo Sassone would have been delighted by the adaptation. And just a little further, in the Châtelet métro station, there was a string septet playing Mozart.

Now for Monday and the presidential speech.

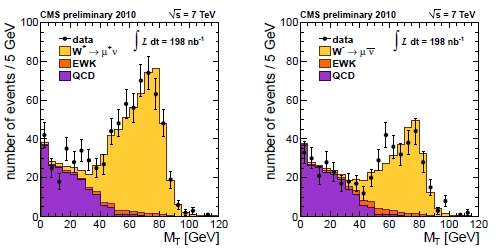

Electroweak Signals From CMS

Here I wish to assemble some of the electroweak physics results produced by CMS in time for ICHEP. The CMS experiment has shown results that use up to 280 inverse nanobarns of proton-proton collisions, but for electroweak measurements (those involving W and Z signals, to be clear) the dataset used amounts to at most 200 inverse nanobarns of well-understood data.

It is exciting, and quite pleasing, to see how quickly the results have been produced. The speed at which a collaboration goes from raw data on tape to plots for conferences is in my opinion quite an important indicator of the confidence of the collaboration in the whole chain: detector, analysis tools, internal scrutiny. And CMS appears to pass this evaluation with flying colours!

So, W bosons are readily produced in proton-proton collisions, as is clear in the distributions below. These show the transverse mass of muon-neutrino systems, in events where a high-momentum muon has been detected, and where the calorimeter is used to measure the imbalance in the energy flow due to the escaped neutrino.

From a comparison of the left and the right panel you can see that the LHC produces more positive W bosons than negative ones (the W contribution is the yellow histogram in both panels, turning on above 40 GeV of transverse mass). Violation of some basic symmetry rule? No, simply the result of the initial state containing more positive-charge quarks than negative-charge ones: each proton carries two up valence quarks and only one down!

Please also note how clean the W signal is. These distributions will one day allow us to improve the already excellent precision on the mass of the W boson, and to perform a host of other detailed studies.
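For readers who have not met the transverse mass before, here is a minimal sketch of how it is usually computed from the muon transverse momentum, the missing transverse energy, and the azimuthal angle between them. The function name and the input values are my own illustrative choices, not CMS code or CMS data.

```python
import math

def transverse_mass(pt_lepton, met, delta_phi):
    """Transverse mass (GeV) of a lepton + missing-energy system.

    pt_lepton : lepton transverse momentum, in GeV
    met       : missing transverse energy, in GeV (stands in for the neutrino pT)
    delta_phi : azimuthal angle between lepton and missing energy, in radians
    """
    return math.sqrt(2.0 * pt_lepton * met * (1.0 - math.cos(delta_phi)))

# Illustrative inputs only (assumed, not measured): a 40 GeV muon and
# 40 GeV of missing transverse energy.
mt_back_to_back = transverse_mass(40.0, 40.0, math.pi)  # opposite directions
mt_collinear = transverse_mass(40.0, 40.0, 0.0)         # same direction

print(mt_back_to_back, mt_collinear)
```

The back-to-back configuration gives the largest transverse mass for given momenta, which is why the W distribution piles up just below the W mass and then drops off.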

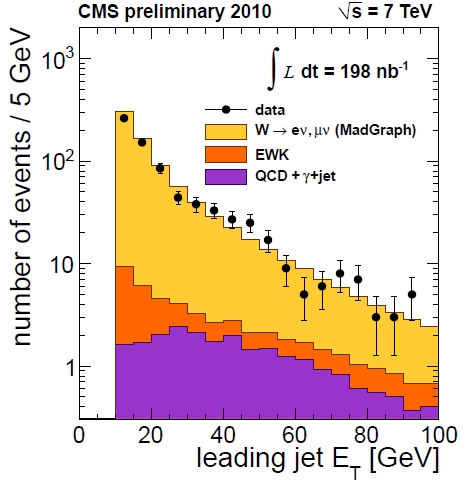

One thing we can already do now, however, is to study the energy of jets recoiling against the W boson. This can be seen in the plot attached below: the transverse energy of the recoiling jet closely follows the predictions of simulations.

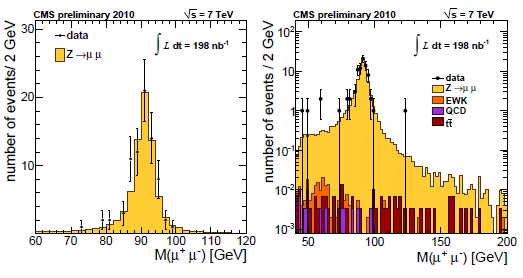

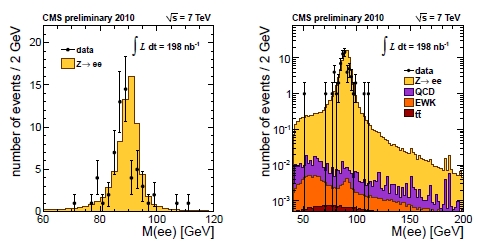

And what about Z bosons? Well, of course they are less frequent, both because of the smaller production rate and because of the smaller branching fraction to electron and muon final states. Still, CMS produced significant signals already with 200 inverse nanobarns of data. Have a look at the dimuon mass, shown both in linear (left) and logarithmic scale (right) in the figure below.

What is significant in the plots is the extremely clean signal these decays provide: backgrounds are totally invisible in a linear scale, and they only appear in the log plot. At the Z mass peak, backgrounds appear to amount to less than a part per mille. Also worth noting is the very good resolution of the detector: the width of the Z boson mass distribution is close to that which the Z naturally has, due to its extremely short lifetime.
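The link between the natural width and the lifetime is just the relation width = hbar / lifetime. As a rough sketch, using the world-average Z width of about 2.4952 GeV (a value I am quoting from memory of the PDG tables, not from the CMS plots), the lifetime comes out at about 2.6e-25 seconds:

```python
HBAR_GEV_S = 6.582119569e-25  # reduced Planck constant, in GeV * s
Z_WIDTH_GEV = 2.4952          # assumed world-average Z total width, in GeV

def lifetime_from_width(width_gev):
    """Mean lifetime in seconds of a particle with the given total width in GeV."""
    return HBAR_GEV_S / width_gev

z_lifetime_s = lifetime_from_width(Z_WIDTH_GEV)
print(f"Z lifetime ~ {z_lifetime_s:.2e} s")
```

A detector whose mass resolution is much better than about 2.5 GeV will therefore show a peak whose width is dominated by the Z itself rather than by the instrument.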

A similar signal is visible with electron-positron final states, as shown below:

Again, one notes the extremely clean nature of these events: the QCD background is mostly irrelevant. However, this is an inclusive selection: if one were to look for events with a Z boson and several jets, say, the QCD component would dramatically increase its relative importance. Such considerations will come into play when we search for new physics signals!

With the data shown above, CMS has measured the cross section of W and Z production in electron and muon final states at 7 TeV, as well as the ratio of W to Z production, a number which can be known with better accuracy than the absolute rate, due to the canceling of several systematic uncertainties. You can find all the measurements in the CMS public web pages. Here I will just flash one last figure, which nicely shows the increase of the production rate of vector bosons with the center-of-mass energy of the hadron collision.
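To see why the ratio is better known than the absolute rates, here is a schematic version of the cross-section formula. The symbols are my own shorthand, not the notation of the CMS papers: $N$ is the background-subtracted event yield, $A$ the acceptance, $\varepsilon$ the selection efficiency, $\mathcal{L}$ the integrated luminosity, and $B$ the leptonic branching fraction.

$$
\sigma_W \, B(W \to \ell\nu) = \frac{N_W}{A_W \, \varepsilon_W \, \mathcal{L}},
\qquad
\sigma_Z \, B(Z \to \ell\ell) = \frac{N_Z}{A_Z \, \varepsilon_Z \, \mathcal{L}}
$$

$$
R = \frac{\sigma_W \, B(W \to \ell\nu)}{\sigma_Z \, B(Z \to \ell\ell)}
  = \frac{N_W}{N_Z} \cdot \frac{A_Z \, \varepsilon_Z}{A_W \, \varepsilon_W}
$$

The luminosity drops out of $R$ entirely, and any lepton reconstruction effects common to both selections cancel in part in the ratio of efficiencies.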

Note that the blue lines showing the trend of cross section versus energy are broken: having proton versus antiproton or proton versus proton changes the production mechanisms, and thus the rate cannot be strictly compared with the Tevatron and UA1/2 measurements (on the left).

All in all, a rich bounty of measurements, already with 200 inverse nanobarns of data! I drool at the thought of what we will do with three orders of magnitude more data next year!
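To put "three orders of magnitude" in numbers, here is a back-of-the-envelope sketch of how event counts scale with integrated luminosity. The 10 nb cross section is only an assumed round number of the right order for W production times leptonic branching fraction at 7 TeV, not a measurement quoted from the CMS results, and acceptance and efficiency are ignored unless passed in.

```python
NB_INV_PER_PB_INV = 1000.0  # 1 inverse picobarn = 1000 inverse nanobarns

def expected_events(cross_section_nb, luminosity_nb_inv, efficiency=1.0):
    """Expected event count: cross section x integrated luminosity x efficiency."""
    return cross_section_nb * luminosity_nb_inv * efficiency

ASSUMED_SIGMA_NB = 10.0             # assumption: illustrative round number
lumi_now_nb_inv = 200.0             # dataset discussed in this post
lumi_future_nb_inv = lumi_now_nb_inv * 1000.0  # three orders of magnitude more

n_now = expected_events(ASSUMED_SIGMA_NB, lumi_now_nb_inv)
n_future = expected_events(ASSUMED_SIGMA_NB, lumi_future_nb_inv)
lumi_future_pb_inv = lumi_future_nb_inv / NB_INV_PER_PB_INV

print(n_now, n_future, lumi_future_pb_inv)
```

Under these assumptions a couple of thousand produced events per lepton flavour today would become a couple of million with 200 inverse picobarns.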